The global race for artificial intelligence supremacy is being fought on a battlefield made of silicon. As models like GPT-4 and Claude 3 become household names, the underlying hardware, and specifically the split between chips built for training and chips built for inference, has become one of the most important things for tech investors to track.

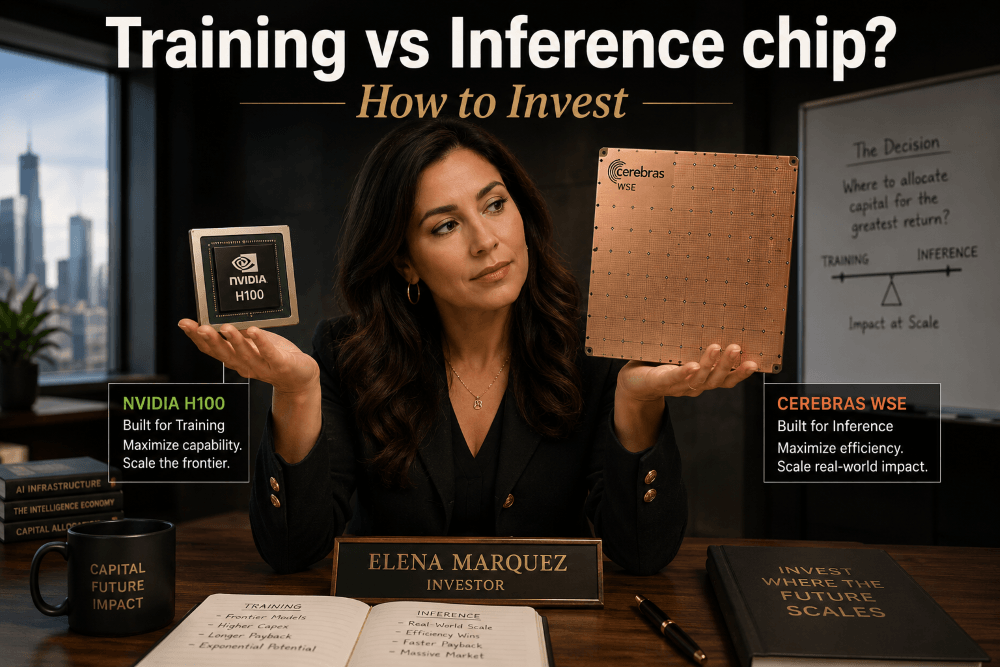

Not all chips are created equal. The hardware required to teach an AI how to “think” is fundamentally different from the hardware required to let that AI answer a user’s question. Understanding this distinction is the key to identifying which companies will lead the next decade of market growth.

The Fundamental Split: Training vs. Inference

To invest successfully in semiconductors, you must distinguish between the two phases of an AI’s lifecycle. Training is the creation phase, while inference is the application phase.

Training: The Computational Brute Force

Training is the “education” of the AI. It requires feeding trillions of data points into a neural network and having it adjust its internal parameters until it picks up the patterns in that data. This process is extremely resource-intensive and requires chips that can run a massive number of calculations in parallel.

- Goal: High precision and massive throughput.

- Duration: Can take weeks or months on thousands of linked GPUs.

- Primary Drivers: Data center expansion and frontier model development.
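To get a feel for why training runs take weeks on thousands of GPUs, here is a rough back-of-envelope sketch. It relies on a widely used rule of thumb (about 6 floating-point operations per model parameter per training token). The model size, token count, GPU count, per-chip throughput, and utilization below are illustrative assumptions, not figures from any vendor or lab.

```python
def training_flops(params: float, tokens: float) -> float:
    """Approximate total training compute: ~6 FLOPs per parameter per token."""
    return 6.0 * params * tokens


def training_days(params, tokens, num_gpus, peak_flops_per_gpu, utilization):
    """Wall-clock days to train, given the cluster's sustained throughput."""
    sustained = num_gpus * peak_flops_per_gpu * utilization  # FLOP/s
    seconds = training_flops(params, tokens) / sustained
    return seconds / 86_400  # seconds per day


# Illustrative assumptions only.
days = training_days(
    params=70e9,              # 70B-parameter model (assumed)
    tokens=2e12,              # 2 trillion training tokens (assumed)
    num_gpus=2_000,           # cluster size (assumed)
    peak_flops_per_gpu=1e15,  # 1 PFLOP/s peak per accelerator (assumed)
    utilization=0.4,          # share of peak actually sustained (assumed)
)
print(f"Estimated training time: {days:.1f} days")
```

Halving the GPU count doubles the estimate, which is why frontier labs keep buying larger clusters rather than simply waiting longer.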

Inference: The Scalable Reality

Inference is what happens when you use AI. Every time you ask a chatbot a question, the trained model “infers” an answer from what it learned during training. This requires speed (low latency) and efficiency.

- Goal: Fast response times and low power consumption.

- Scale: Happens billions of times per day across the globe.

- Primary Drivers: Consumer adoption and software integration.
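Because inference runs billions of times per day, small differences in energy per query add up quickly. The sketch below shows the arithmetic. Both chips are hypothetical, and the power draw, response time, batch size, query volume, and electricity price are all assumed values chosen only to illustrate the calculation.

```python
JOULES_PER_KWH = 3.6e6


def energy_per_query_joules(chip_power_watts, seconds_per_query, concurrent_queries=1):
    """Energy attributed to one query when the chip serves several at once."""
    return chip_power_watts * seconds_per_query / concurrent_queries


def daily_energy_cost_usd(queries_per_day, joules_per_query, usd_per_kwh):
    """Electricity cost of one day of queries (chip power only, no cooling)."""
    kwh = queries_per_day * joules_per_query / JOULES_PER_KWH
    return kwh * usd_per_kwh


# Hypothetical chips; every number here is an assumption for illustration.
general_j = energy_per_query_joules(700, 2.0, concurrent_queries=8)
specialized_j = energy_per_query_joules(300, 1.0, concurrent_queries=8)

for name, joules in [("general-purpose", general_j), ("specialized", specialized_j)]:
    cost = daily_energy_cost_usd(1e9, joules, usd_per_kwh=0.10)
    print(f"{name}: {joules:.1f} J/query, ${cost:,.0f} per day at 1B queries")
```

The point is the shape of the math, not the specific numbers: at this scale, a chip that is both faster and lower-power per query changes the operating bill every single day.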

Key Players: Nvidia, Cerebras, and the Cooling Giants

While Nvidia has dominated the early stages of the AI boom, the market for both training and inference chips is diversifying as specialized needs arise.

Nvidia & Cerebras: The Training Powerhouses

Nvidia’s H100 and B200 chips are the gold standard for training. However, Cerebras takes a disruptive approach with its Wafer-Scale Engine (WSE), which uses an entire silicon wafer as one giant processor to cut the communication lag that occurs when separate chips have to “talk” to each other.

Vertiv (VRT): The Infrastructure Play

As an investor, you cannot ignore the heat. Dense training clusters generate so much thermal energy that air cooling alone is often no longer enough. Vertiv (VRT) is a leading supplier of liquid cooling and power infrastructure. Without CDUs (Coolant Distribution Units) like the ones Vertiv sells, the massive training clusters built on Nvidia and Cerebras hardware would quite literally overheat. VRT represents a “picks and shovels” play on the physical survival of AI hardware.
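A quick physics sketch shows why liquid wins at high power densities. The heat a fluid can carry away is its mass flow times its specific heat times its temperature rise, so the required flow is the heat load divided by the other two. The 100 kW rack and the 10 K temperature rise below are assumed values for illustration; the fluid properties are standard approximate figures for water and air near room temperature.

```python
def coolant_mass_flow_kg_s(heat_watts, specific_heat_j_per_kg_k, delta_t_k):
    """Mass flow needed to carry away heat_watts with a delta_t_k temperature rise."""
    return heat_watts / (specific_heat_j_per_kg_k * delta_t_k)


# Approximate fluid properties near room temperature.
WATER_CP, WATER_DENSITY = 4186.0, 1000.0  # J/(kg*K), kg/m^3
AIR_CP, AIR_DENSITY = 1005.0, 1.2         # J/(kg*K), kg/m^3

rack_heat_w = 100_000.0  # 100 kW rack (assumed)
delta_t_k = 10.0         # allowed coolant temperature rise (assumed)

water_m3_s = coolant_mass_flow_kg_s(rack_heat_w, WATER_CP, delta_t_k) / WATER_DENSITY
air_m3_s = coolant_mass_flow_kg_s(rack_heat_w, AIR_CP, delta_t_k) / AIR_DENSITY

print(f"Water: {water_m3_s * 1000 * 60:.0f} liters per minute")
print(f"Air:   {air_m3_s:.1f} cubic meters per second")
print(f"Air needs roughly {air_m3_s / water_m3_s:,.0f}x the volume of water")
```

Under these assumptions, water moves the same heat with a few thousand times less volume than air, which is the basic reason cooling vendors get pulled along by every increase in chip power.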

Groq and AMD: The Inference Contenders

Companies like Groq are gaining traction in the inference market. Their LPUs (Language Processing Units) are designed specifically for the high-speed requirements of Large Language Models. Meanwhile, AMD’s MI300 series is positioned as a cost-effective alternative for enterprises that want to run inference at scale.

Investment Opportunity Analysis

For investors, the biggest opportunity is currently shifting. While the “Training” phase drove Nvidia’s initial surge, the “Inference” phase is where the long-term volume lies. As AI becomes embedded in smartphones (Edge AI) and everyday apps, demand for power-efficient inference chips, and for the infrastructure that cools the data centers serving them, is likely to grow sharply.
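One way to see why the volume ends up in inference is to ask how long a deployed model must run before its cumulative inference compute exceeds the one-time training bill. The sketch below uses common rules of thumb (about 6 FLOPs per parameter per training token, about 2 FLOPs per parameter per generated token). The model size, query volume, and response length are assumptions for illustration only.

```python
def crossover_days(params, training_tokens, queries_per_day, tokens_per_query):
    """Days of serving until cumulative inference compute equals training compute."""
    training_flops = 6.0 * params * training_tokens  # one-time cost
    daily_inference_flops = 2.0 * params * queries_per_day * tokens_per_query
    return training_flops / daily_inference_flops


# Illustrative assumptions only.
days = crossover_days(
    params=70e9,            # model size (assumed; it cancels out of the ratio)
    training_tokens=2e12,   # tokens seen during training (assumed)
    queries_per_day=1e8,    # 100 million queries per day (assumed)
    tokens_per_query=500,   # average tokens processed per query (assumed)
)
print(f"Inference compute overtakes training after about {days:.0f} days")
```

Training is paid once, while inference is paid every day the product stays popular, so at these assumed volumes the serving side passes the training side within months.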

Top Tiers for 2026:

- Growth: Specialized inference startups (like Groq) or Vertiv (VRT) for thermal management infrastructure.

- Value: Established giants like AMD and Intel as they catch up on price and performance per watt.

- Safe Bet: Nvidia’s continued dominance through software ecosystem lock-in.

Frequently Asked Questions

1. Why can’t the same chip do both?

Technically, it can. Nvidia GPUs handle both well. However, specialized inference chips can be cheaper to run and use less power, making them better suited to mass-scale consumer applications.

2. Why is Vertiv (VRT) mentioned alongside chip makers?

Chips are useless if they melt. Vertiv provides the liquid cooling necessary for training chips (like those from Nvidia and Cerebras) to function at peak performance.

3. Is Cerebras a public company?

Cerebras is currently private. It publicly filed for an IPO in 2024, so investors should watch for its entry into public markets.
